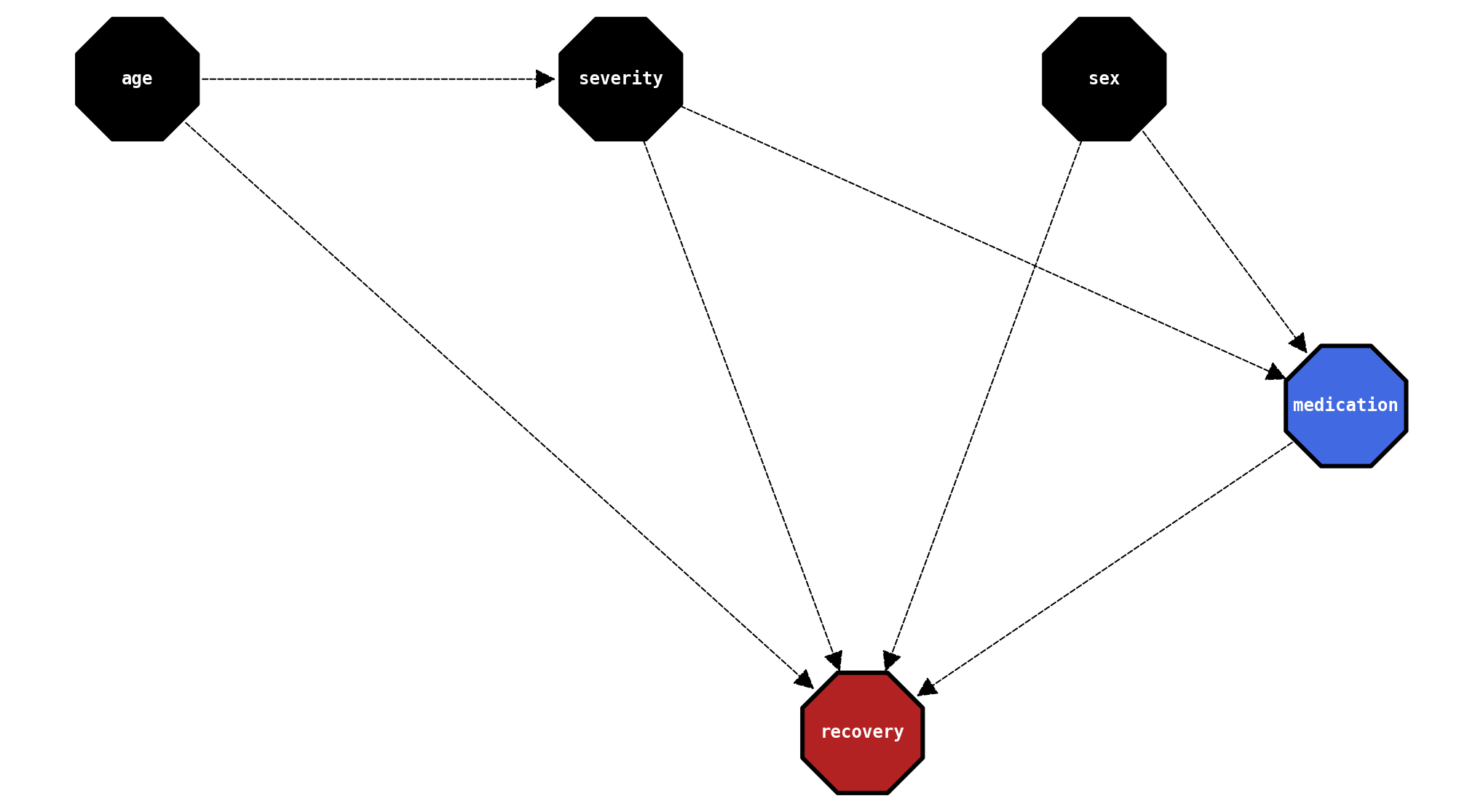

The features and shortcomings of Bayesian Networks (with CausalNex)

Benchmarking Bayesian Networks (CausalNex) on a synthetic causal inference dataset

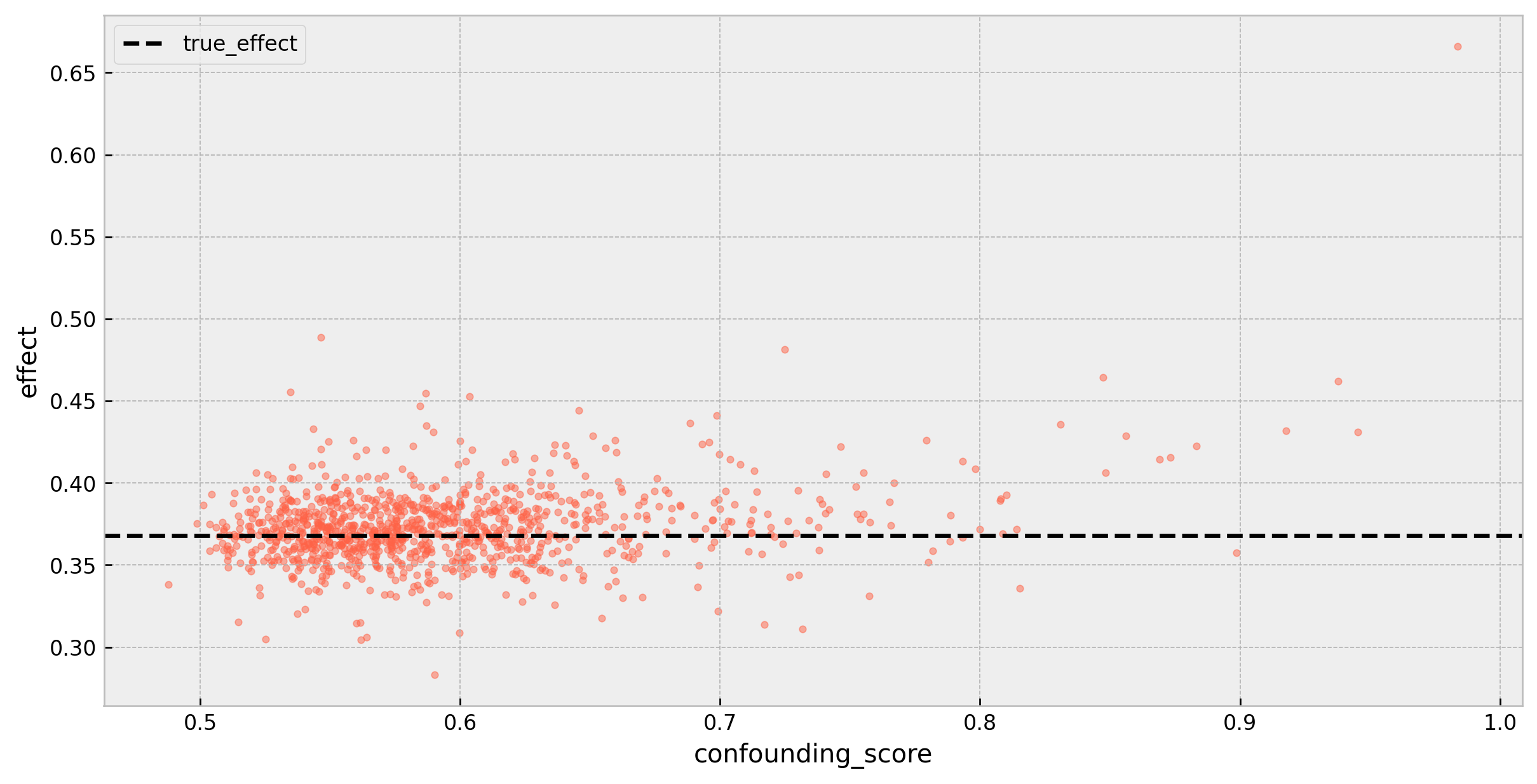

Diagnosing validity of causal effects on decision trees

Diagnosing confounding on leaf nodes of decision trees

Solving the mystery of my dog's breed with ML

Using the Stanford Dogs Dataset, deep learning, and explainability through prototypes to infer the unknown breed of my dog

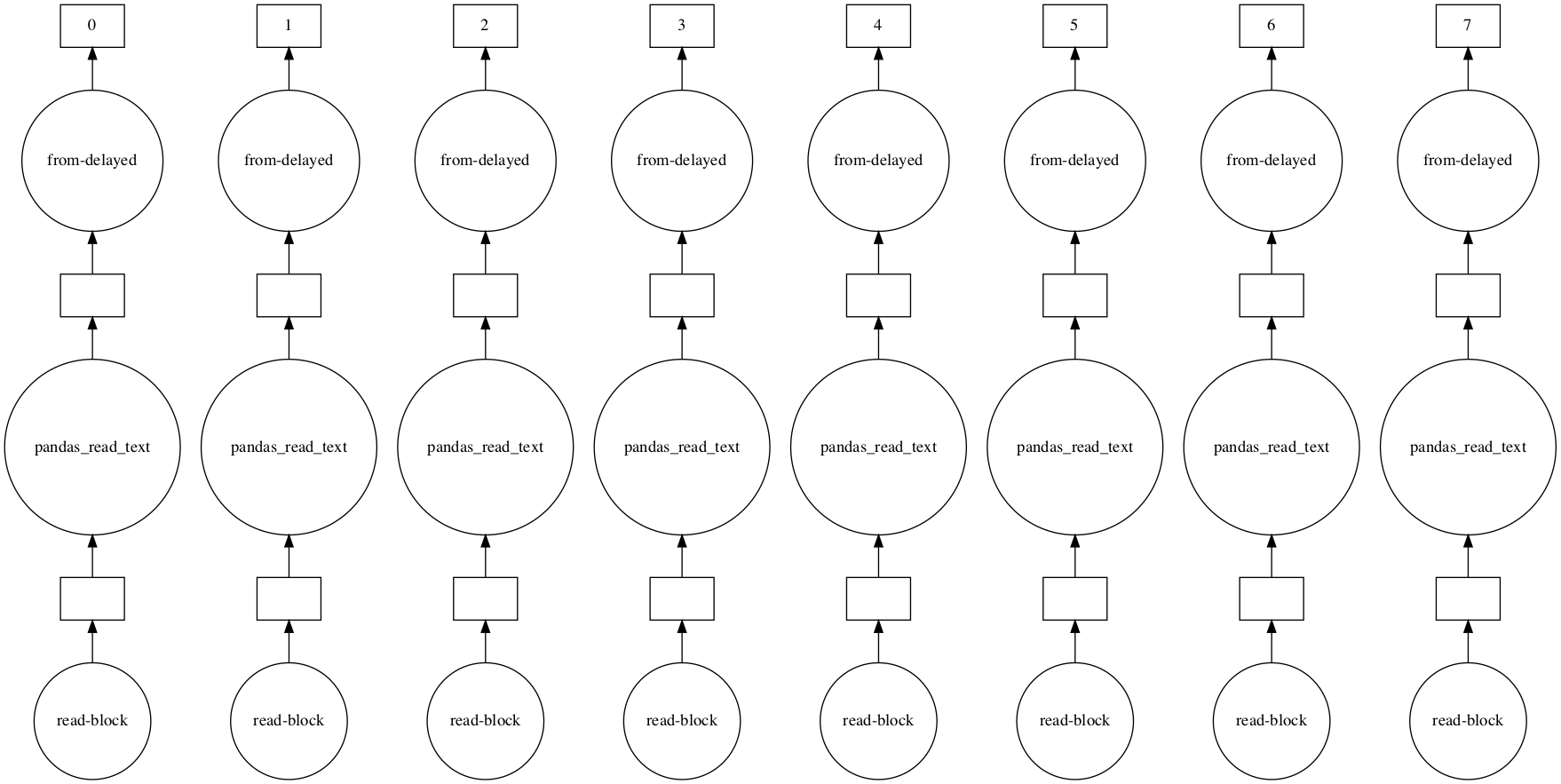

Training models when data doesn't fit in memory

Using Dask and some other tricks so you can train your models under memory constraints

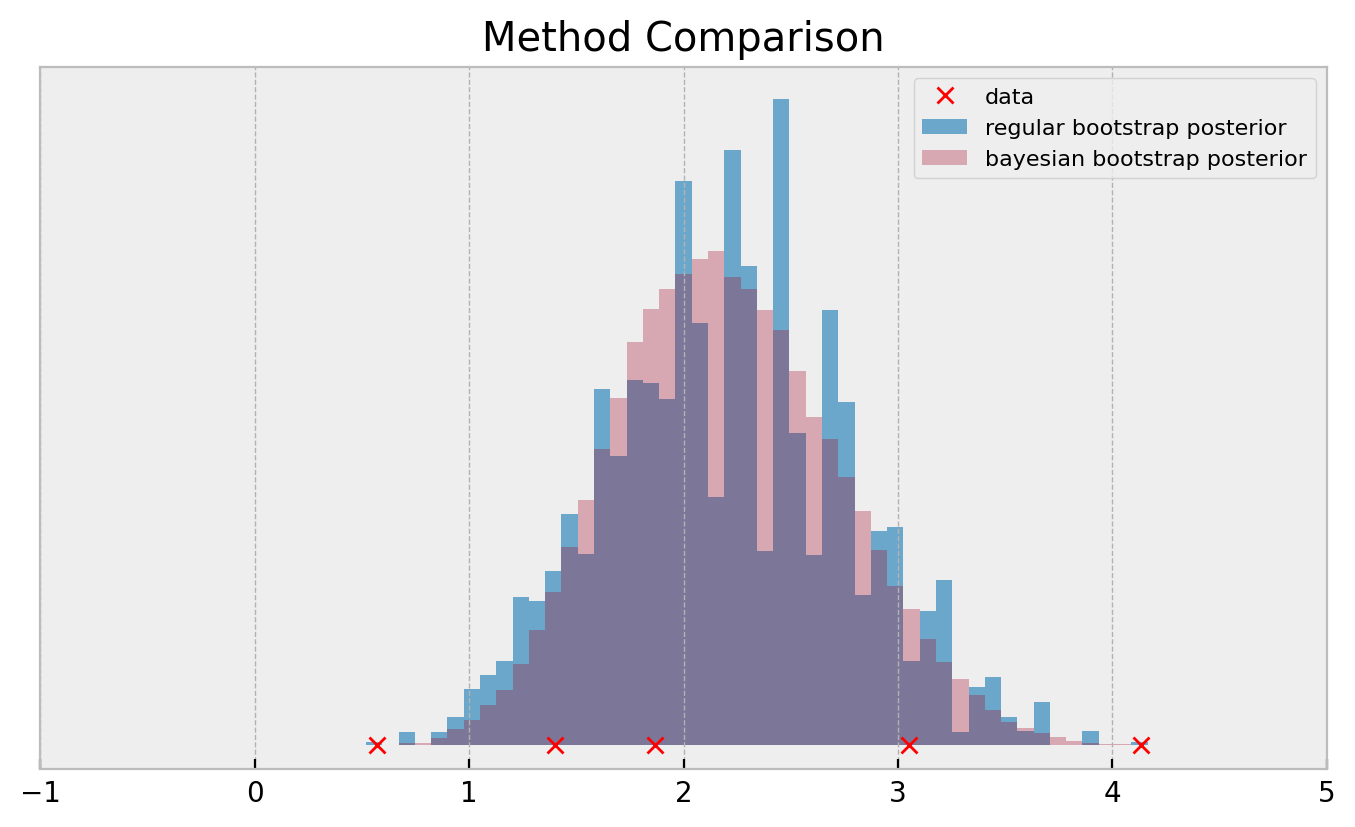

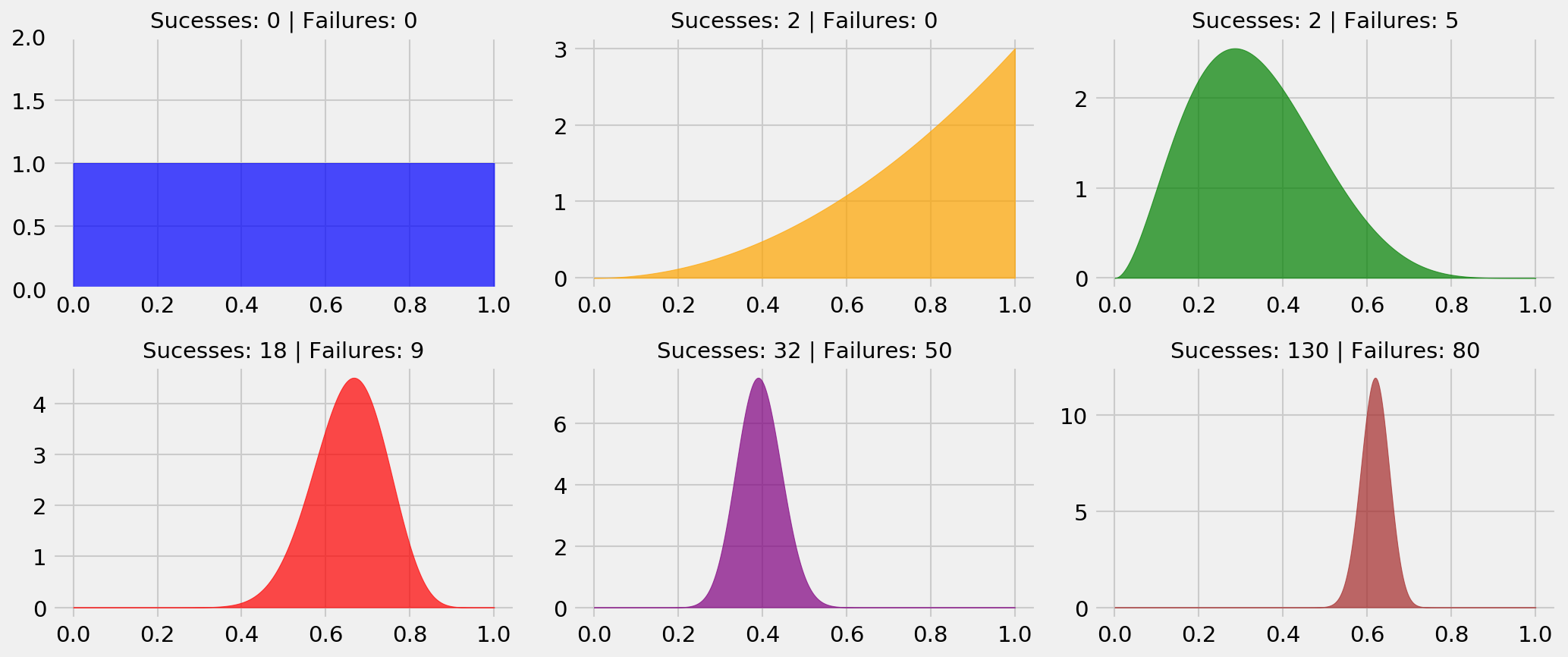

The Bayesian Bootstrap

Faster, smoother version of the bootstrap that yields better results on small data

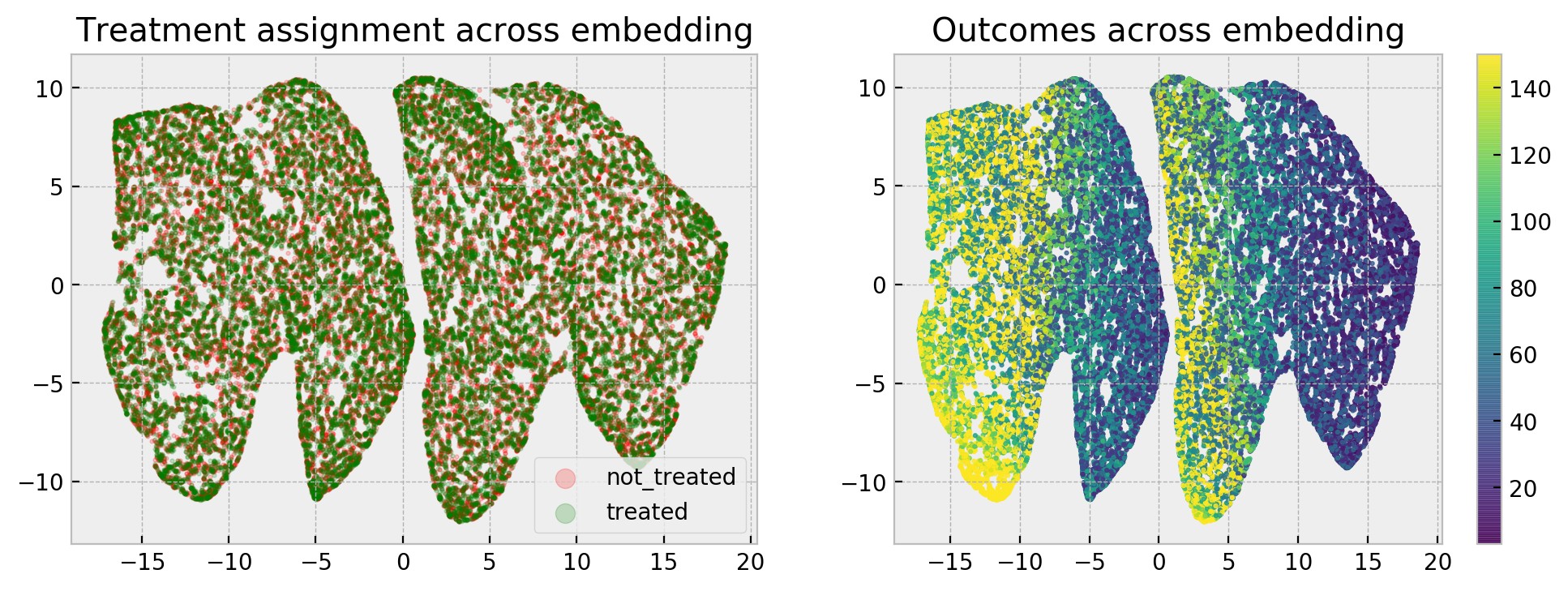

Calculating counterfactuals with random forests

Another tree-based causal inference model using nearest neighbors on the embedding produced by a Random Forest

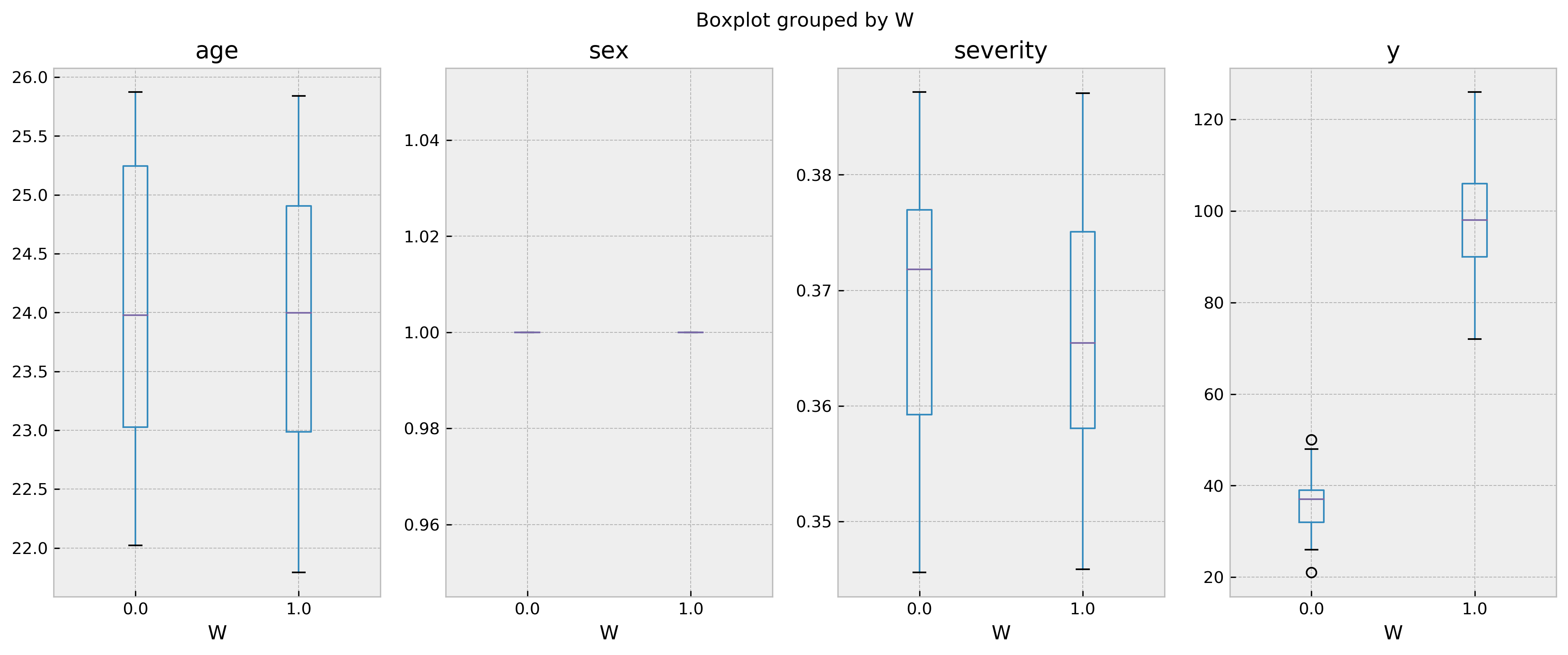

Calculating counterfactuals with decision trees

Decision Trees can be decent causal inference models, with a few tweaks

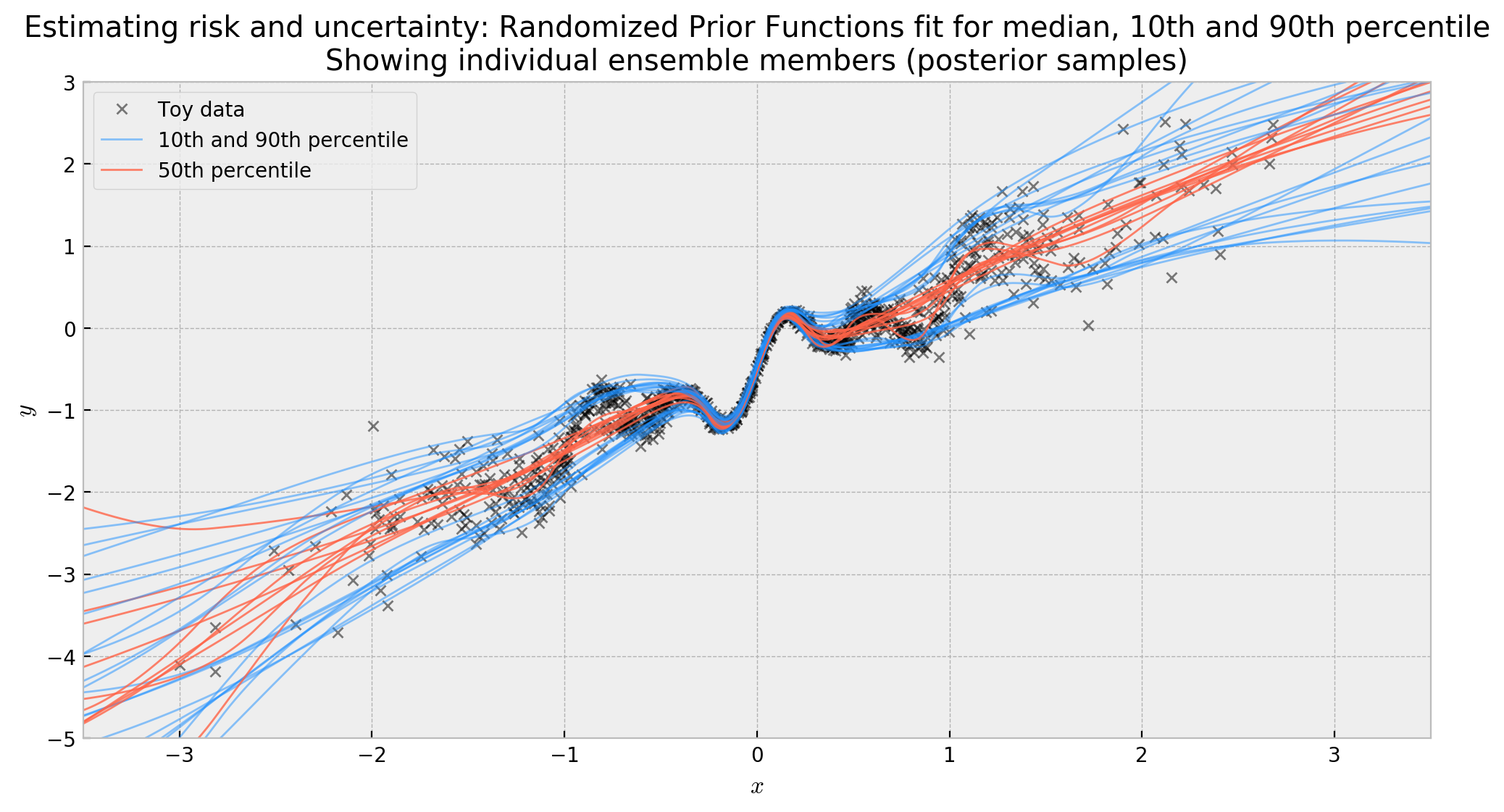

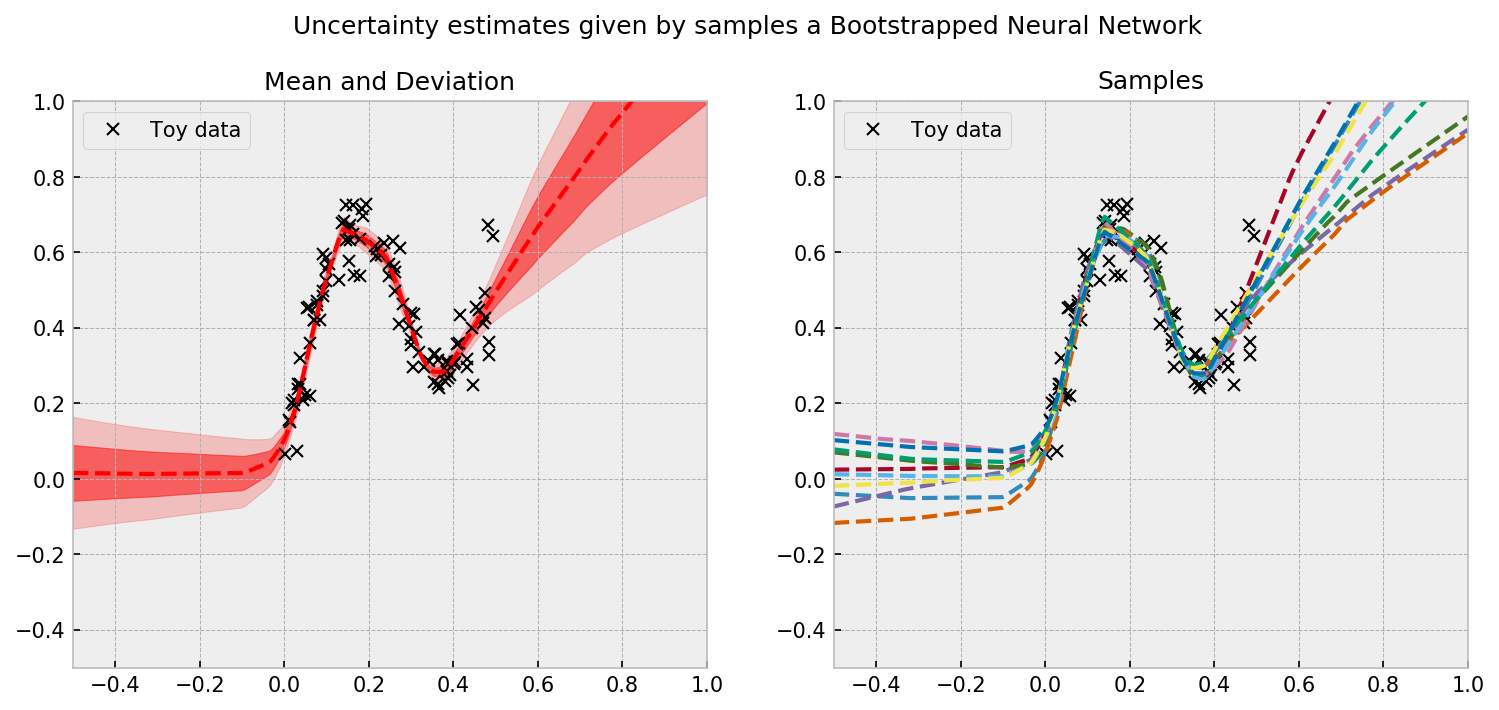

Risk and Uncertainty in Deep Learning

Building a neural network that can estimate aleatoric and epistemic uncertainty at the same time

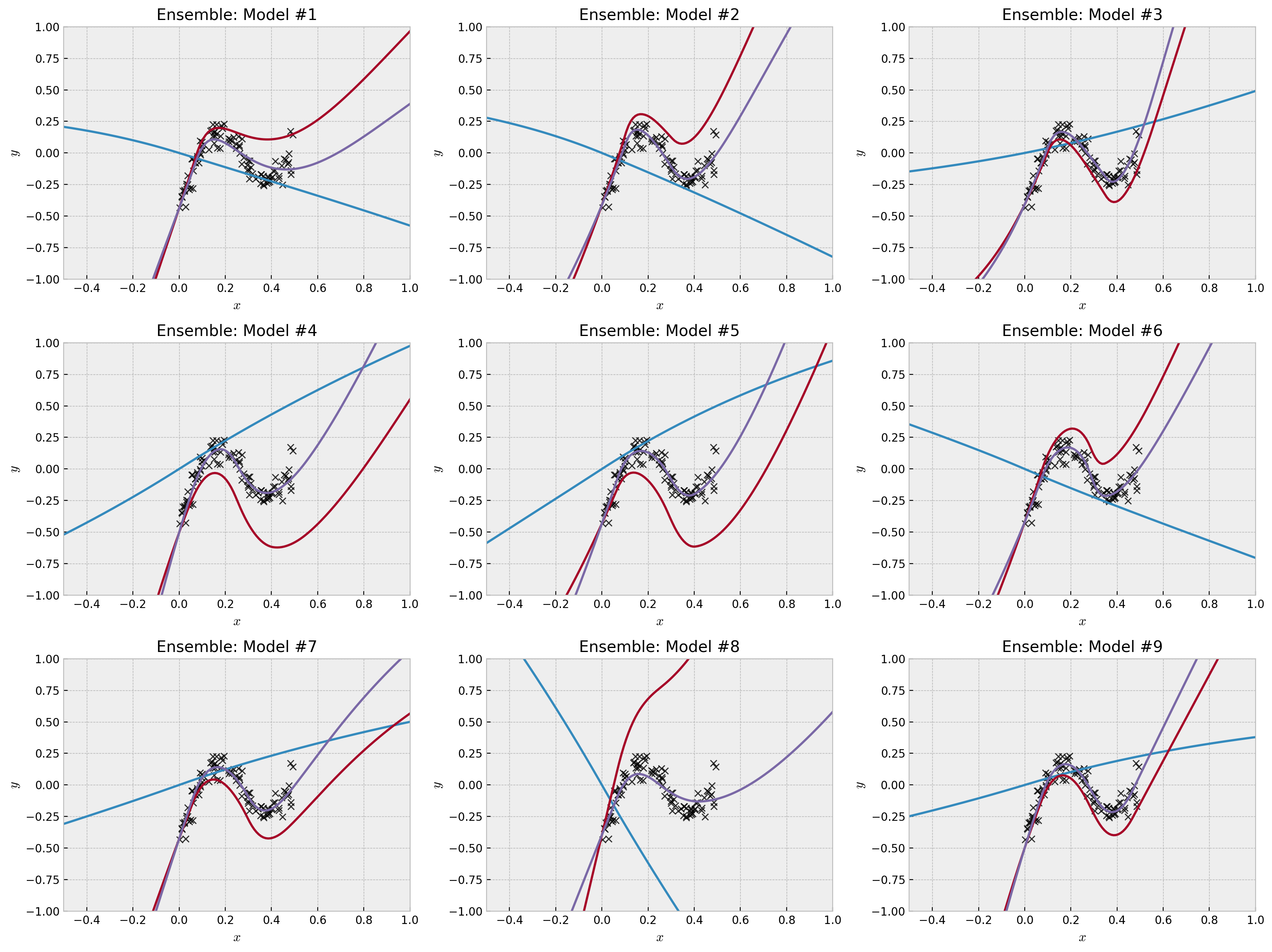

A Practical Introduction to Randomized Prior Functions

Understanding a state-of-the-art Bayesian deep learning method with Keras code

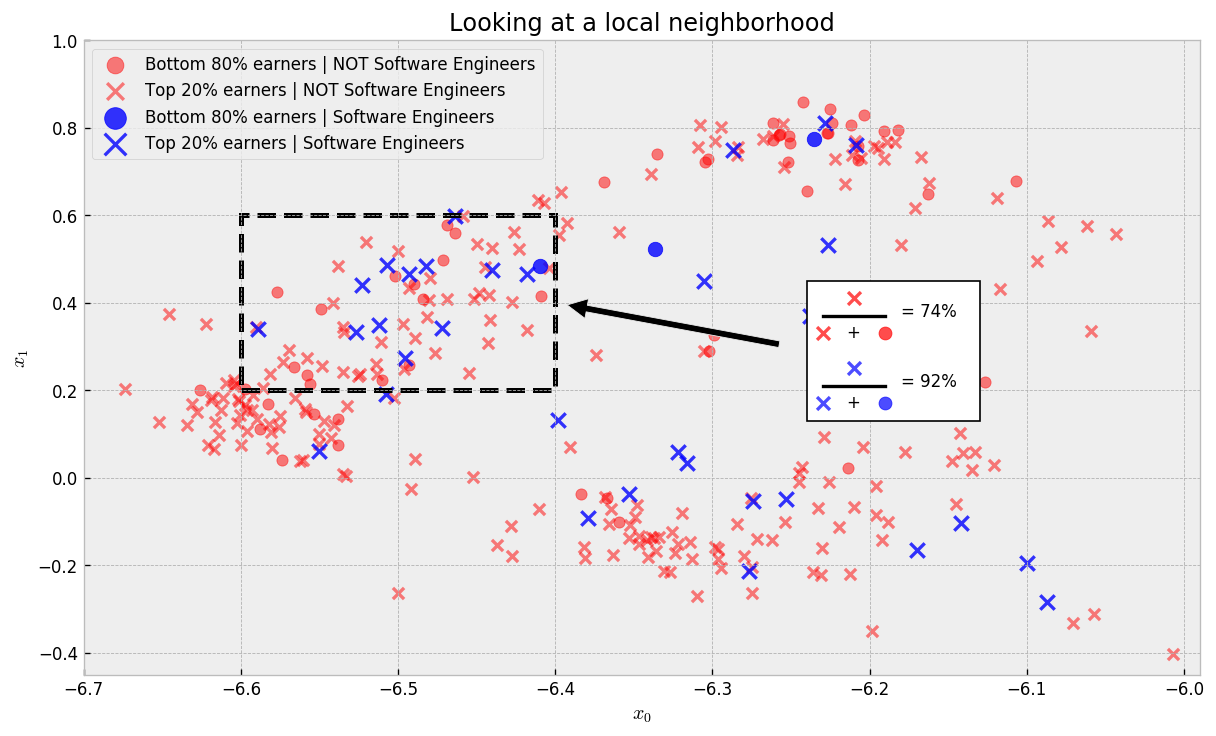

A Causal Look At What Makes a Kaggler Valuable

Using causal inference to determine which titles and skills will make you earn more

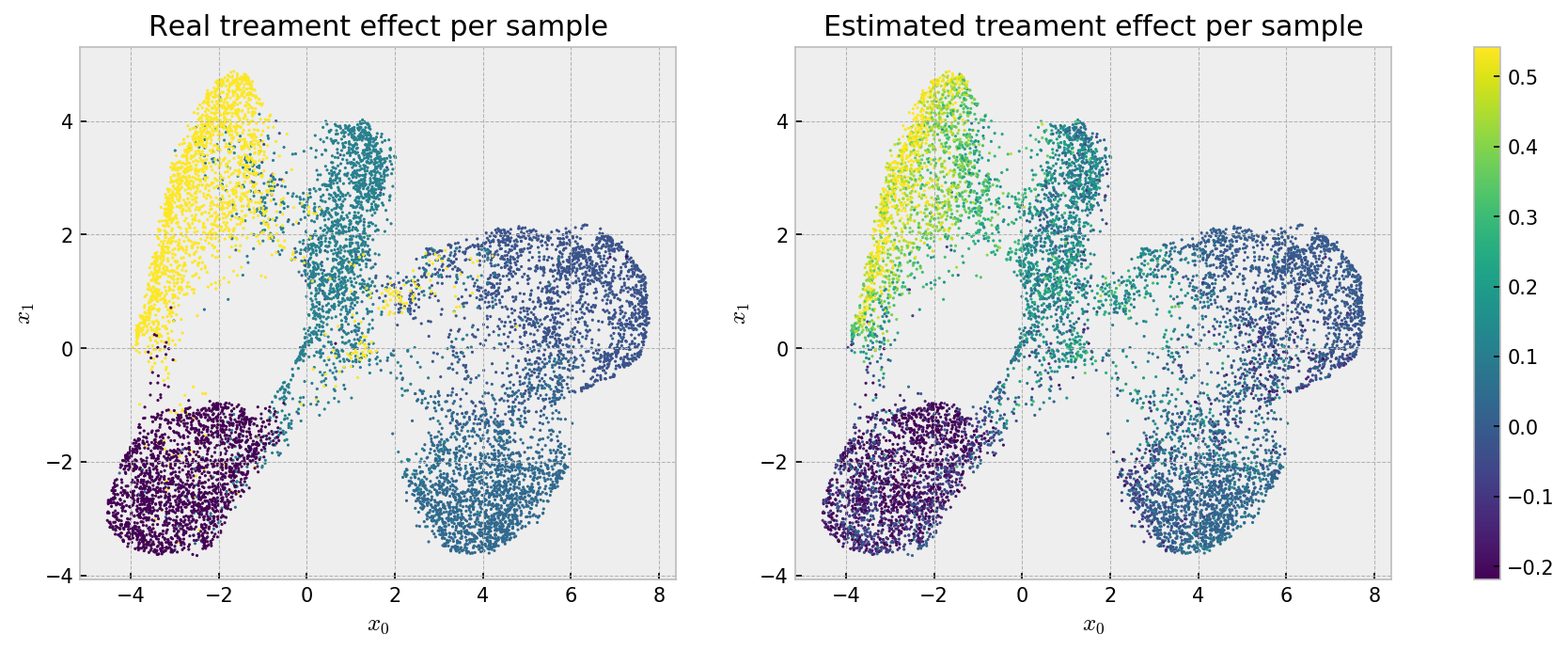

Causal inference and treatment effect estimation

Estimating counterfactual outcomes using Generalized Random Forests, ExtraTrees and embeddings

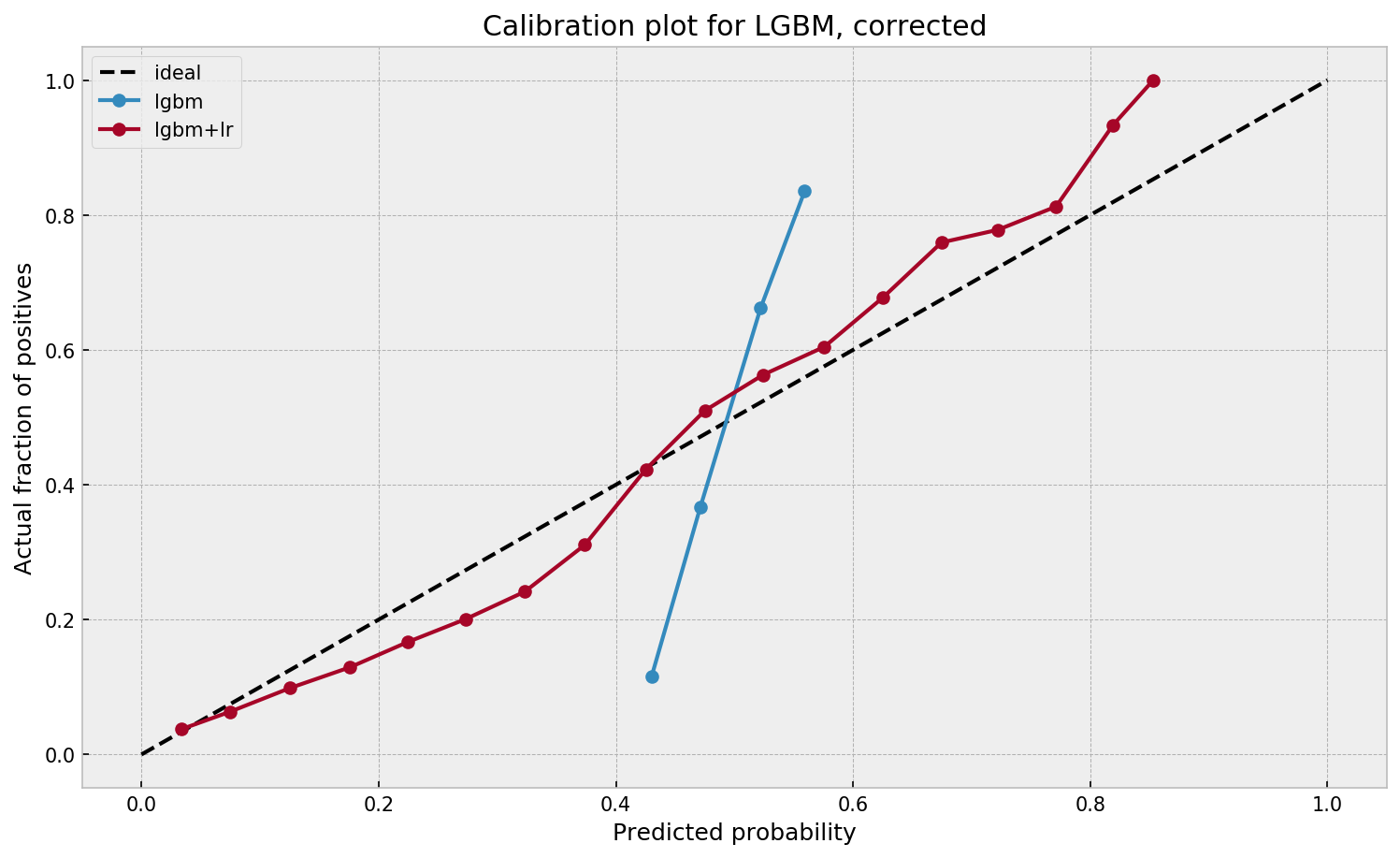

Calibration of probabilities for tree-based models

Using a stacked logistic regression to calibrate the probabilities of Random Forests and Gradient Boosting Machines with no loss of performance

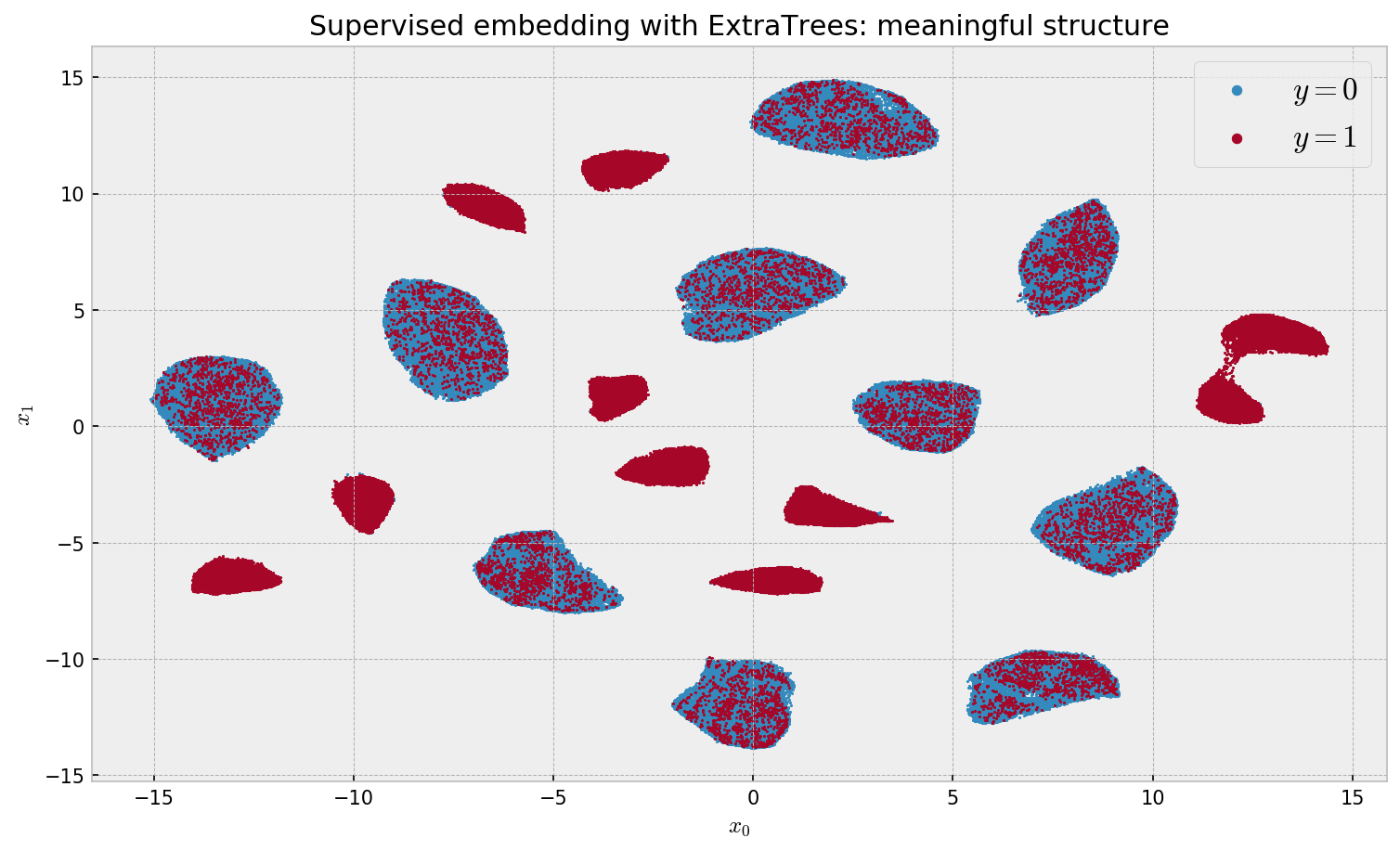

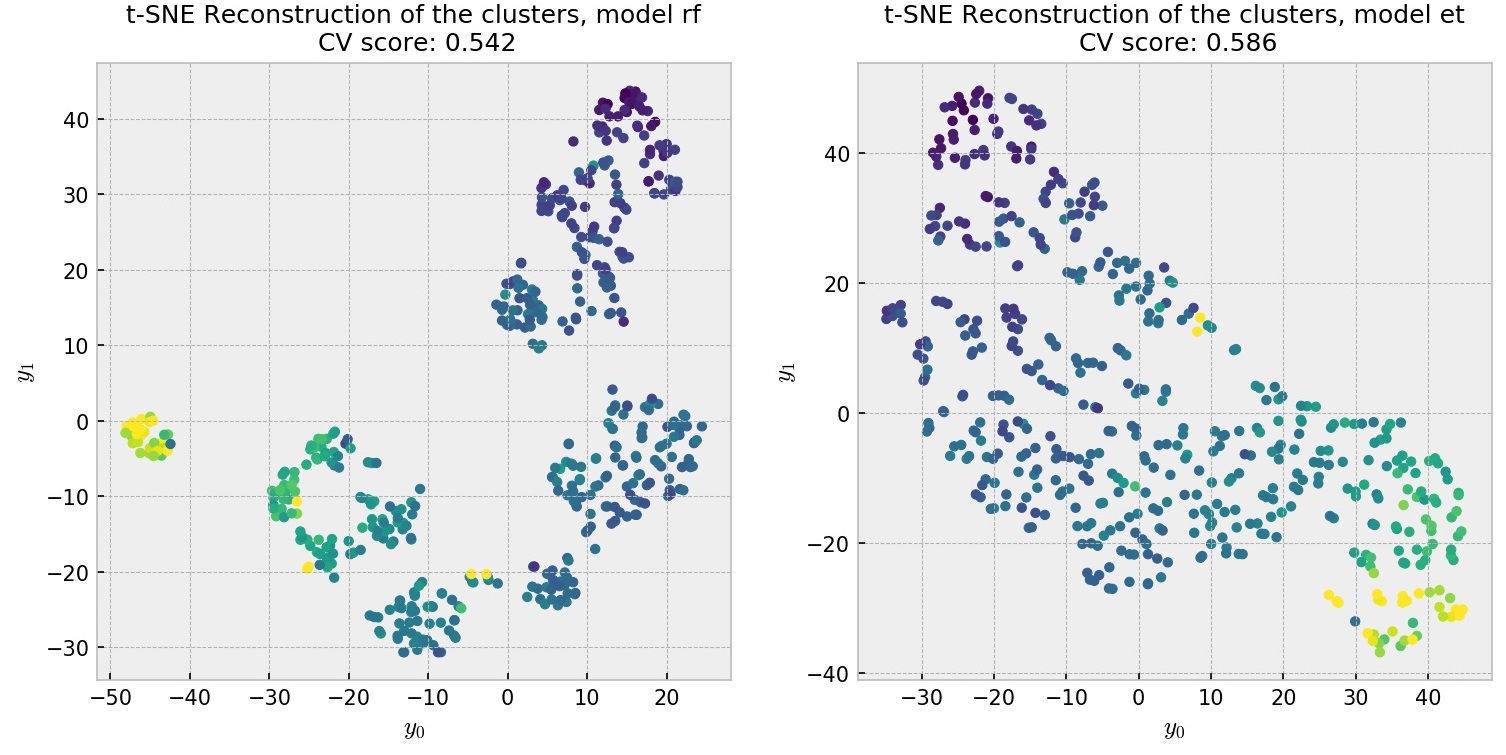

Supervised dimensionality reduction and clustering at scale with RFs and UMAP

Uncovering relevant structure and visualizing it at scale by partnering Extremely Randomized Trees and UMAP

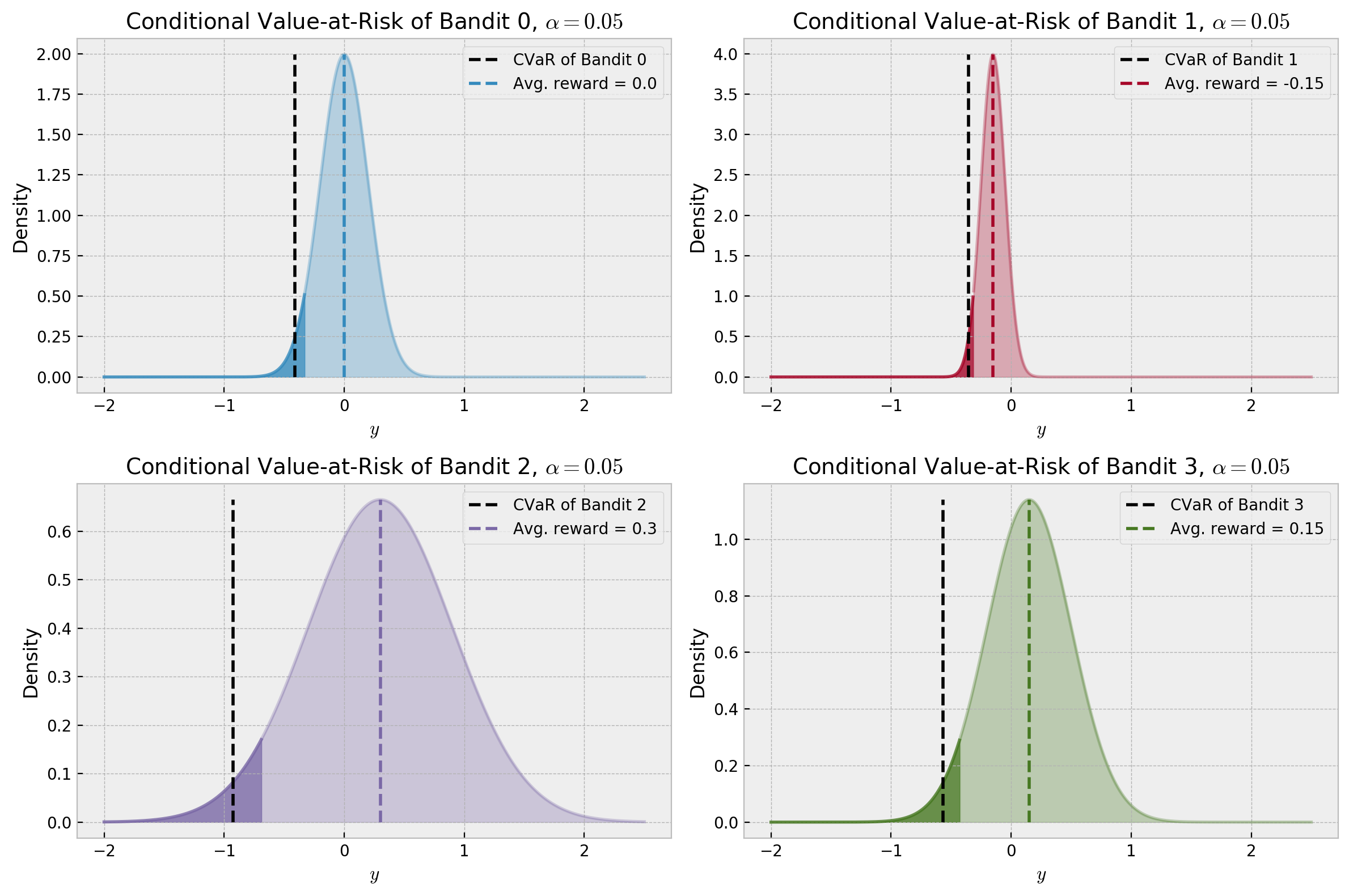

Risk-aware bandits

Experimenting with risk-aware reward signals, Thompson Sampling, Bayesian UCB, and the MaRaB algorithm

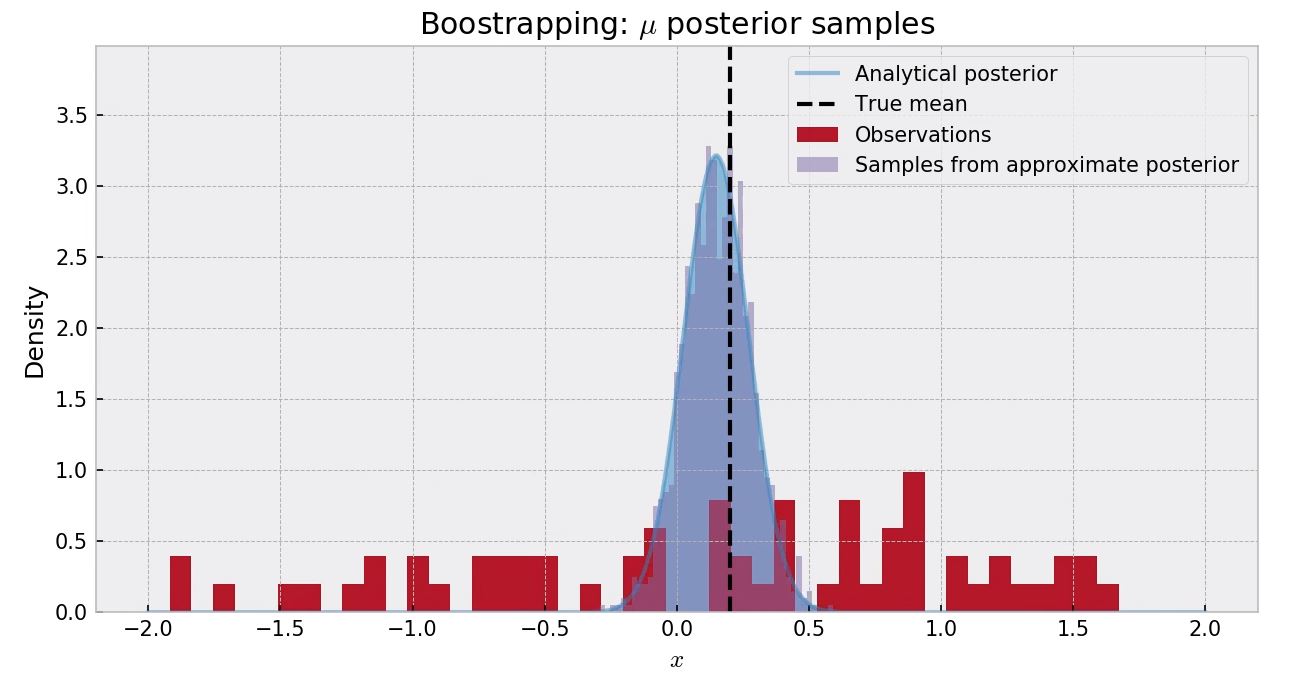

Approximate Bayesian inference for bandits

Experimenting with Conjugate Priors, MCMC Sampling, Variational Inference and Bootstrapping to solve a Gaussian Bandit problem

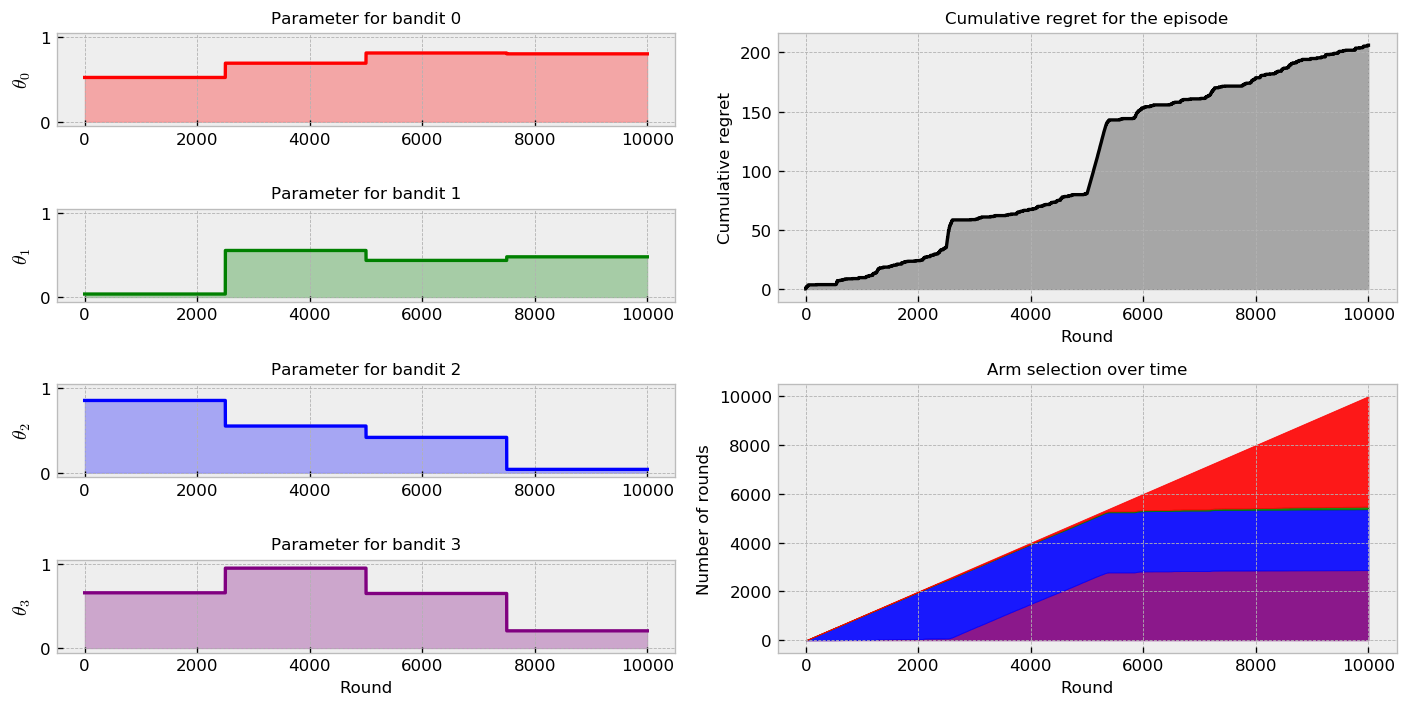

Non-stationary bandits

Solving a Bernoulli Multi-Armed Bandit problem where reward probabilities change over time

Supervised clustering and forest embeddings

Using forests of randomized trees to uncover structure that really matters in messy data

Bootstrapped Neural Networks, RFs, and the Mushroom bandit

Bootstrapped Neural Networks and Random Forests for solving a more realistic contextual bandit problem

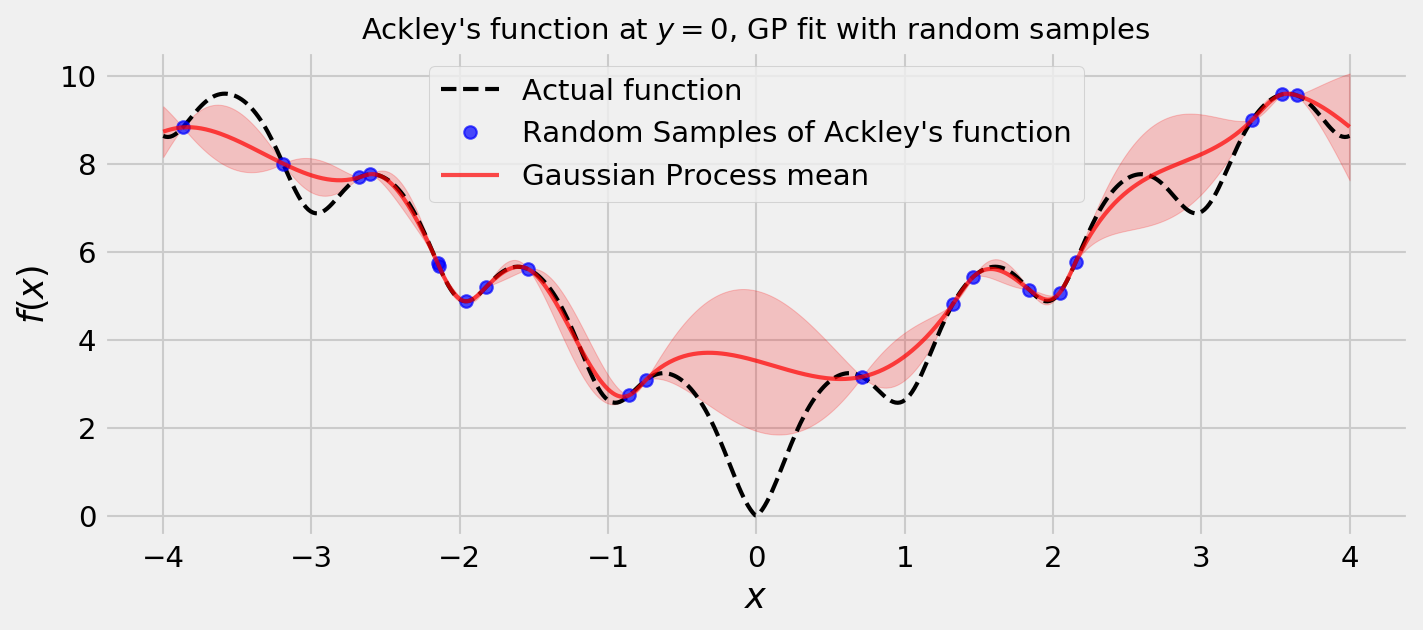

Thompson Sampling, GPs, and Bayesian Optimization

Mixing Thompson Sampling and Gaussian Processes to optimize non-convex and non-differentiable objective functions

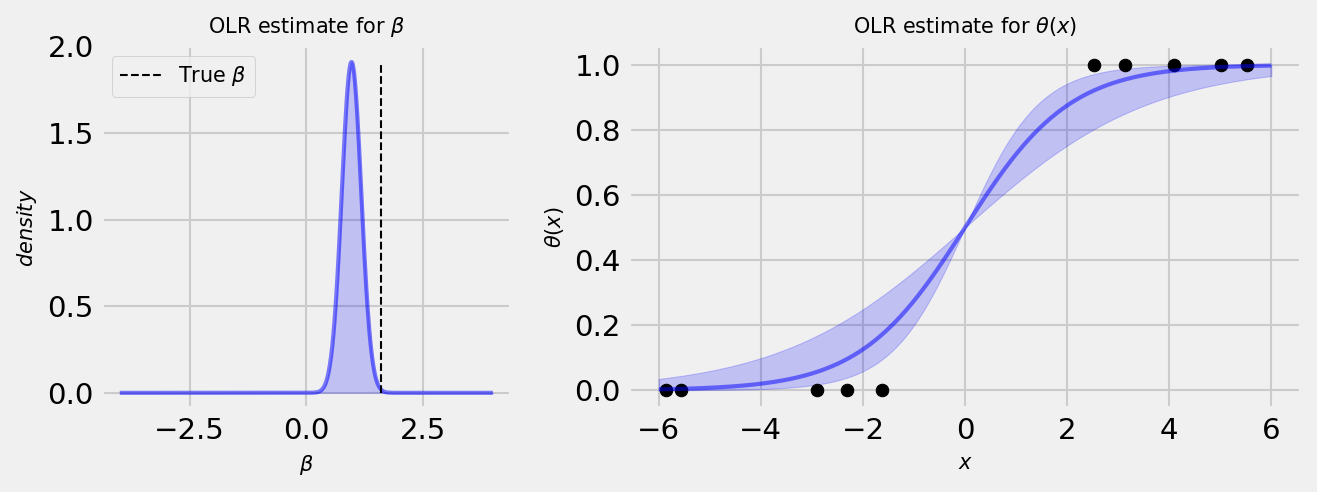

Thompson Sampling for Contextual bandits

Solving a Contextual bandit problem with Bayesian Logistic Regression and Thompson Sampling

Introduction to Thompson Sampling: the Bernoulli bandit

Introducing Thompson Sampling and comparing it to the Upper Confidence Bound and epsilon-greedy strategies in a simple problem